
From strategy to implemented data governance framework

Once you have worked out a strategy to ensure the data integrity of your products, it is time to convert the strategy into a practical data governance framework and implement it.

How do you go from a strategy to an implemented data governance framework?

Nathalie: Normally you have already taken the first step while preparing your strategy: researching all existing SOPs and current practices within your company. You can benchmark the results against industry best practices and the regulations you need to comply with. This is the starting point from which you can proceed.

Review all current procedures and practices

Talk to your employees. Ask how the systems, devices or applications work, what data they generate, what the input and output are, and what the current procedures and processes look like. Ask for manuals, check your employees’ knowledge of data integrity and see whether there are any data risks. Involving all operational colleagues from the start leads to greater commitment.

Determine the data domains

The next step is to determine the data domains that apply within your industry. These differ from sector to sector, e.g. customer or product data domains. Within these data domains are data elements, such as systems and applications that generate important reports and essential data or that describe business methods. In doing so, you look for what is critical and important to your business.

Inventory all systems & devices

Inventory all devices and systems that generate data. Assign each one a data domain, categorize it according to the GAMP classification or a similar scheme (sufficient for an audit!) and describe why you chose that category.

What is the GAMP classification?

Nathalie: The GAMP (Good Automated Manufacturing Practice) guidelines, published by ISPE, are guidelines for validating automated systems. They help you draw up the documents needed to validate the systems and to guarantee their quality. The latest version is GAMP 5, which focuses on risk control and better quality management. The GAMP 5 guidelines describe the boundaries of the categories into which all systems can be categorized.

- Category 1: Infrastructure software: these are usually operating systems on which applications are installed (Windows, Linux, custom, …). These operating systems are qualified but not validated; it is the applications that are validated.

- Category 3: Standard software that is not configured: also called ‘off-the-shelf software’. This software can be easily installed and does not need to be adapted to your business needs. This category also includes software that can be configured but is used with its standard configuration in your company.

- Category 4: Configured software: these are software applications that are configured according to your specific business needs. This is the largest and most complex category to validate.

- Category 5: Custom software: these are software packages written from scratch especially for your company.

GAMP 5 no longer uses Category 2 (firmware), which is why the numbering skips from 1 to 3.

The choice between the GAMP classification and similar categories depends on your industry and on the devices and systems in your company. The GAMP guidelines apply to the pharmaceutical sector. If you work in a non-pharmaceutical sector, you are not required to use the GAMP categories and you can rely on the USP classification instead, which distinguishes three groups:

- Group A includes standard equipment with no measurement capability or usual requirement for calibration, where the manufacturer’s specification of basic functionality is accepted as user requirements. Conformance of Group A equipment with user requirements may be verified and documented through visual observation of its operation. E.g. a simple pipette.

- Group B includes standard equipment and instruments providing measured values as well as equipment controlling physical parameters (such as temperature, pressure, or flow) that need calibration, where the user requirements are typically the same as the manufacturer’s specification of functionality and operational limits. The conformance of Group B instruments or equipment to user requirements is determined according to the standard operating procedures for the instrument or equipment and documented during IQ and OQ. Examples of equipment in this group are melting point apparatus, pH meters, thermometers,…

- Group C includes instruments and computerized analytical systems, where user requirements for functionality, operational, and performance limits are specified for the analytical application. The conformance of Group C instruments to user requirements is determined by specific function tests and performance tests. Installing these instruments can be a complicated undertaking and may require the assistance of specialists. A full qualification process, as outlined in the USP chapter, should apply to these instruments. Examples of instruments in this group include high-pressure liquid chromatographs, mass spectrometers,…

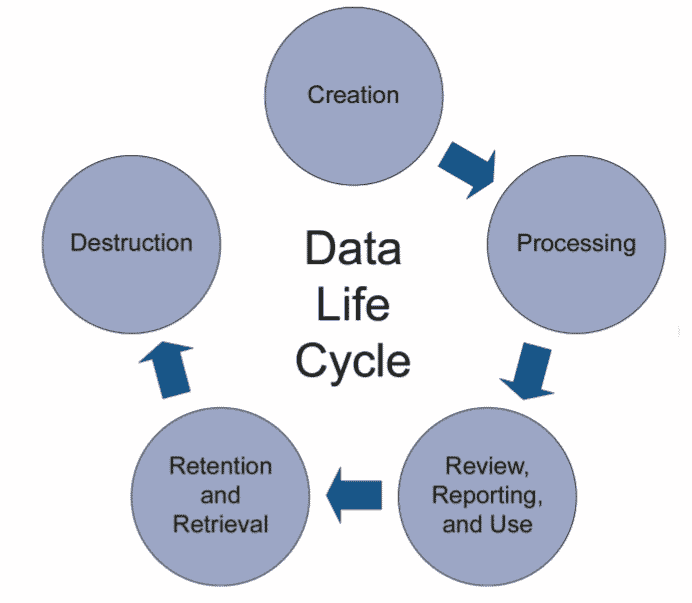
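The inventory of systems and devices can be kept as structured records. The following is a minimal Python sketch, not a prescribed format: the class `InventoryEntry`, its field names, the helper `by_category` and the example rows are all hypothetical, chosen only to show a data domain, a category and a rationale stored together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InventoryEntry:
    """One row of the system and device inventory (illustrative field names)."""
    name: str
    data_domain: str
    category: str   # free-form label, e.g. a GAMP category or a USP group
    rationale: str  # why this category was chosen

    def __post_init__(self):
        # The rationale must be documented, so refuse empty values.
        if not self.rationale.strip():
            raise ValueError(f"{self.name}: a rationale for the category is required")


def by_category(entries):
    """Group system names by their assigned category."""
    grouped = {}
    for entry in entries:
        grouped.setdefault(entry.category, []).append(entry.name)
    return grouped


# Hypothetical example rows.
inventory = [
    InventoryEntry("pH meter", "product", "USP Group B",
                   "Provides measured values and needs calibration"),
    InventoryEntry("HPLC system", "product", "USP Group C",
                   "Computerized analytical system with application-specific requirements"),
]

print(by_category(inventory))
```

Requiring a non-empty rationale at creation time is one way to make sure the reasoning behind each category is never left undocumented.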

Mapping the data process flow

Mapping the data process flow is part of good data lifecycle management. All five phases of the data lifecycle must be controlled and managed to ensure accurate, reliable and compliant records and data: creation, capture and registration; processing, including transformation or migration; review, reporting and use; retention and retrieval; and destruction.

The data process flow is a visual representation of how data flows through the processes and systems of the company (input, output, storage points and routes between two ‘destinations’). This flow must be documented in a ‘data audit trail’, a log file of everything that happens and may affect the final product (as determined by the risk assessment).
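To make the idea of a data audit trail concrete, here is a minimal Python sketch of an append-only log. It is an illustration under assumptions, not a compliant implementation: the names `AuditEntry` and `AuditTrail`, the recorded fields and the example values are hypothetical, and a real system would also need secure storage and access control.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEntry:
    """A single immutable record of who did what, when, and on which system."""
    timestamp: datetime
    user: str
    system: str
    action: str
    old_value: Optional[str]
    new_value: Optional[str]


class AuditTrail:
    """Append-only log: entries can be added and read, never edited or removed."""

    def __init__(self):
        self._entries = []

    def record(self, user, system, action, old_value=None, new_value=None):
        # The timestamp is taken at the moment of recording, in UTC.
        entry = AuditEntry(datetime.now(timezone.utc), user, system,
                           action, old_value, new_value)
        self._entries.append(entry)
        return entry

    def entries(self):
        # Return a tuple so callers cannot modify the internal list.
        return tuple(self._entries)


# Hypothetical usage: a value is created and later adjusted.
trail = AuditTrail()
trail.record("jdoe", "pH meter", "create", new_value="7.02")
trail.record("jdoe", "pH meter", "modify", old_value="7.02", new_value="7.20")

for e in trail.entries():
    print(e.timestamp.isoformat(), e.user, e.system, e.action, e.old_value, e.new_value)
```

Keeping both the old and the new value means the original data stays traceable after an adjustment.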


Create a data integrity questionnaire

A questionnaire typically contains between 50 and 100 questions. Keep in mind the commitment of your company and the type of equipment when drawing it up: you cannot make the same demands on a thermometer as on a computer, whose security must be much stronger and whose data output is different. Make sure your questions cover the full scope!

The FDA prepares an annual paper with the minimum requirements to be followed. In addition, your questionnaire must comply with the ALCOA(+) principles, and it can be useful to take into account the interpretation or focus of the auditors: place the focus wherever they do. It does not matter if the questionnaire is not perfect to start with; you can make adjustments along the way.

Examples of questions:

- Attributable: is the source of the data defined? Is the data owner documented?

- Legible: is the data always accessible to the authorized persons? Is the data centrally stored & managed?

- Contemporaneous: is a timestamp registered?

- Original: is the original data stored? Are adjustments logged in the data audit trail?

- Accurate: is this the correct data (error-free)?

Perform the GAP assessment

Test each device, system or application against the questionnaire. Based on the answers you can determine where the GAPs lie between the current status and the desired status.
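A GAP assessment of this kind can be sketched in a few lines of Python. This is only an illustration: the `QUESTIONNAIRE` dictionary holds a handful of the example questions rather than a full set of 50 to 100, and the function `find_gaps`, the yes/no answer format and the thermometer answers are assumptions made for the example.

```python
# A small excerpt of a questionnaire, grouped by ALCOA principle.
QUESTIONNAIRE = {
    "Attributable": [
        "Is the source of the data defined?",
        "Is the data owner documented?",
    ],
    "Contemporaneous": ["Is a timestamp registered?"],
    "Original": ["Is the original data stored?"],
}


def find_gaps(system, answers):
    """Return (system, principle, question) for every question not answered True.

    An unanswered question is treated as a GAP as well (an assumption of this sketch).
    """
    gaps = []
    for principle, questions in QUESTIONNAIRE.items():
        for question in questions:
            if answers.get(question) is not True:
                gaps.append((system, principle, question))
    return gaps


# Hypothetical answers for one device; the last question was left unanswered.
thermometer_answers = {
    "Is the source of the data defined?": True,
    "Is the data owner documented?": False,
    "Is a timestamp registered?": True,
}

gaps = find_gaps("thermometer", thermometer_answers)
for gap in gaps:
    print(gap)
```

Each tuple in the result is one GAP between the current and the desired status, ready to be scored in the risk analysis.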

Perform a risk analysis

Assign a risk level to each GAP based on the three risk factors below. Not every GAP is an action point or an issue. Based on the risk analysis, you determine the priorities for the remediation phase. Normally, low-level risks are acceptable and there is no obligation to take further action. It is up to your company to determine how strictly you handle data integrity.

Use a risk scale of 1-10 or 0-100 and take 3 factors into account:

- Degree of severity: consider the worst possible consequence of a failure in terms of the injury, property damage, system damage and loss of corporate reputation that could occur, e.g. 1 (low) – 5 (very high).

- Occurrence: how many times the GAP has occurred and how probable it is that the GAP will occur, e.g. 0 (technically not possible to occur) – 4 (certainly possible).

- Detectability: also called ‘effectiveness’, this is a subjective numerical estimate of how effective the controls are at preventing or detecting the cause or malfunction before it reaches the customer, e.g. 1 (will be detected during production) – 4 (there is no detection mechanism).
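The text does not say how the three factors are combined into one score. One common option, assumed here, is to multiply them (as in an FMEA-style risk priority number) and rescale the result to 0-100. The functions `risk_score` and `risk_level` and the thresholds for low, medium and high are hypothetical; each company sets its own.

```python
def risk_score(severity, occurrence, detectability):
    """Combine the three factors into a 0-100 score.

    Assumption: the factors are multiplied and rescaled. With severity 1-5,
    occurrence 0-4 and detectability 1-4, the largest product is 5 * 4 * 4 = 80.
    """
    if not 1 <= severity <= 5:
        raise ValueError("severity must be between 1 and 5")
    if not 0 <= occurrence <= 4:
        raise ValueError("occurrence must be between 0 and 4")
    if not 1 <= detectability <= 4:
        raise ValueError("detectability must be between 1 and 4")
    raw = severity * occurrence * detectability
    return round(100 * raw / 80)


def risk_level(score):
    """Map a 0-100 score to a level; the thresholds are illustrative only."""
    if score < 25:
        return "low"
    if score < 60:
        return "medium"
    return "high"


# Hypothetical GAP: moderate severity, occasional occurrence, fairly detectable.
score = risk_score(severity=3, occurrence=2, detectability=2)
print(score, risk_level(score))
```

With this choice, a GAP that is technically impossible (occurrence 0) always scores 0, and the worst case on all three factors scores 100.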

The remediation phase

Work out technical solutions to mitigate the risks, allocate resources, adjust data ownership if necessary and rewrite SOPs to eliminate GAPs. There are three types of controls: technical, procedural and behavioral. Always start with the technical controls during the remediation phase. Determine short-, mid- and long-term actions. These adjustments should lead to better control over the process, the GxP data or the systems.

Mid-term controls for high data integrity risks can be:

- Additional data oversight

- A second witness of the data registration

- Checking the audit trail

- Restricted management of user access

- Adjusting operational procedures

Introduce procedural controls to establish general data integrity requirements:

- Archive

- GDocP (good documentation practice)

- Backup/recovery processes

Technical controls to ensure data security:

- Badge limited access

- Unique ID/password

- Audit trail

- User access management

In addition, electronic data is generally more reliable and easier to check than paper-based data. Try to avoid a hybrid system of electronic data and paper documents, and digitize data as much as possible.

Remediation actions must be defined, documented and followed up in accordance with the company’s CAPA and risk management procedures.

Follow-up phase

Once all solutions have been implemented, a new risk assessment is necessary to confirm that the residual risks are acceptable. Repeat this risk assessment on a regular basis to ensure that data integrity can be guaranteed and that you are prepared for data integrity audits.

A risk-based and pragmatic approach

To address the industry’s growing need to implement data integrity in a smart and efficient way, Pauwels Consulting has developed a unique data governance program. The program combines a thorough pre-assessment with a subsequent transition according to the well-known PDCA (plan-do-check-act) cycle. GAP assessments and associated actions are carried out in parallel across all departments with continuous feedback loops, and include the alignment of all documentation related to data integrity (DI), e.g. URS, data handling procedures, etc.

Our risk-based and pragmatic approach embeds strong leadership and proper behavioral management. It is designed to achieve and sustain DI cultural excellence across the entire organization. Proper training is crucial, so our Data Integrity team has compiled a variety of training modules that can be tailored to achieve these goals, together!